Researchers

Sijie Wen

Yamin Tang

Faculty Advisor

Dr. Christian Homescu

Abstract

Reinforcement learning (RL) has proven to be an efficient method and is seeing increased use in financial applications such as trading and investment strategy. In Goal-Based Investing (GBI), success is measured by whether the investments meet an investor's financial goal. Portfolio optimization for GBI can be framed as an optimization problem in a data-driven setting, which RL can solve. A goal-based investment model for personalized retirement management is proposed and implemented with RL algorithms such as Q-learning and Deep Deterministic Policy Gradient (DDPG). Compared with traditional portfolio investment, the model shows better performance with significant profitability.

Results

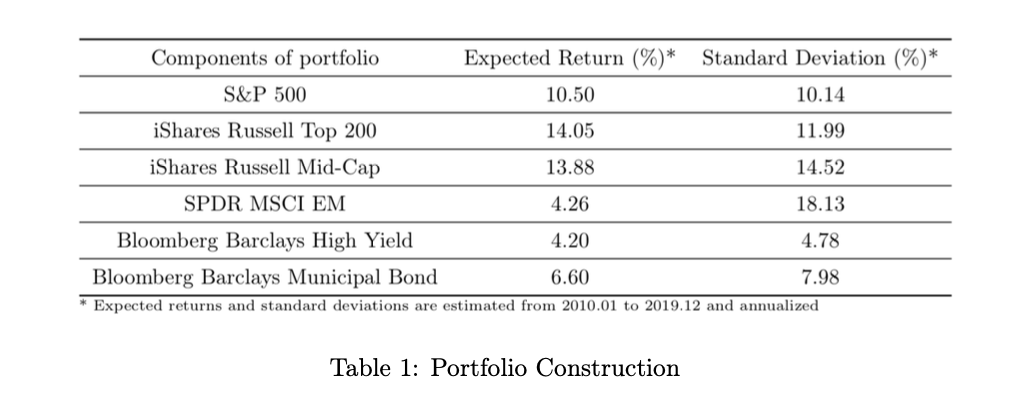

Portfolio Construction

Reinforcement learning is used in finance for trading and investment. Goal-Based Investing (GBI) measures success by whether an investor's financial goal is met. RL can be applied to GBI's portfolio optimization problem. RL algorithms (Q-learning, DDPG) are proposed for personalized retirement management, with better performance than traditional portfolios and significant profitability.

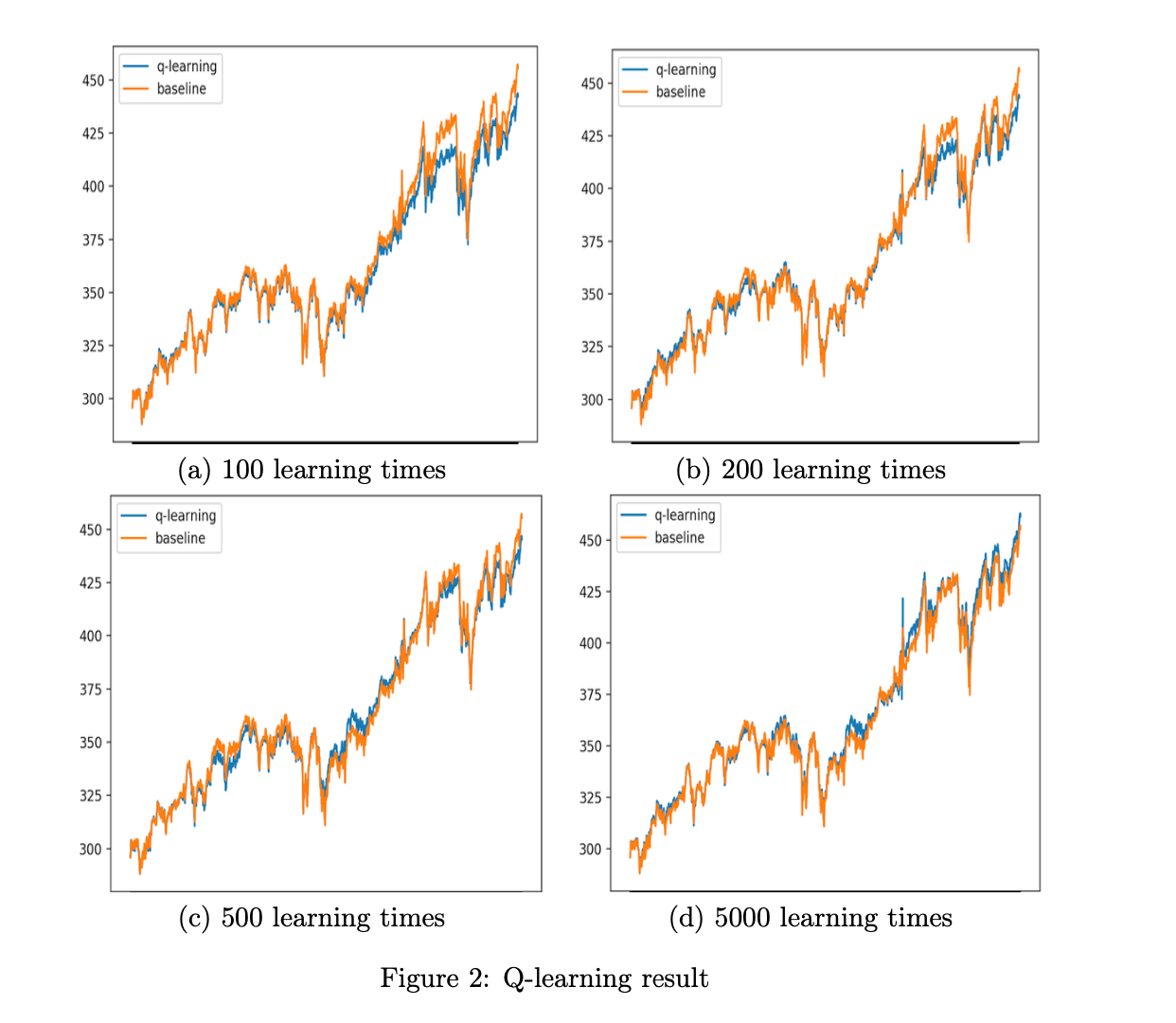

Q-learning Algorithm

The plots below show that the Q-learning portfolio does not outperform the baseline (orange), even when the number of learning iterations is increased to 5000. The Q-learning algorithm is therefore not well suited to the GBI portfolio problem.

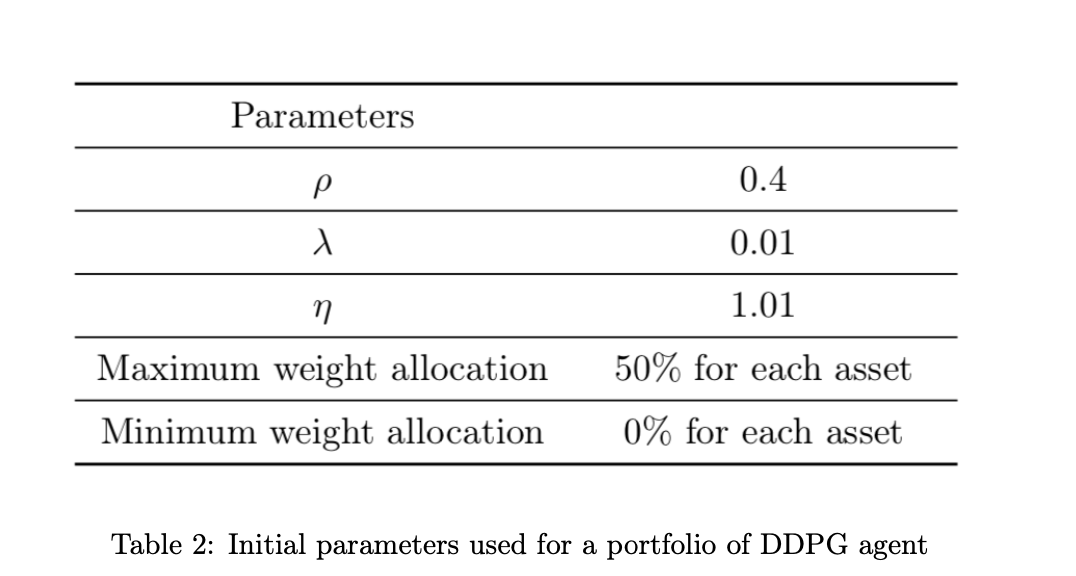
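For reference, the tabular Q-learning update can be sketched as follows. This is a minimal illustration, not the study's implementation: the state encoding (for example, a discretized time step and wealth level), the action set (a small menu of candidate allocations), and the values of `alpha`, `gamma`, and `epsilon` are all assumptions made for the example.

```python
import numpy as np


def epsilon_greedy(q_table, state, epsilon, rng):
    """Pick a random action with probability epsilon, else the greedy one."""
    if rng.random() < epsilon:
        return int(rng.integers(q_table.shape[1]))
    return int(np.argmax(q_table[state]))


def q_learning_update(q_table, state, action, reward, next_state, done,
                      alpha=0.1, gamma=0.99):
    """Apply one tabular Q-learning update in place and return the TD error."""
    # No future value is bootstrapped once the episode has ended.
    best_next = 0.0 if done else float(np.max(q_table[next_state]))
    td_error = reward + gamma * best_next - q_table[state, action]
    q_table[state, action] += alpha * td_error
    return float(td_error)


# Hypothetical sizes: 10 discretized states, 3 candidate allocations.
q_table = np.zeros((10, 3))
rng = np.random.default_rng(0)
action = epsilon_greedy(q_table, state=0, epsilon=0.1, rng=rng)
q_learning_update(q_table, state=0, action=action, reward=1.0,
                  next_state=1, done=False)
```

Because the table has one entry per discretized state and action, this form can only choose among a coarse set of allocations, whereas DDPG works with continuous actions.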

DDPG Algorithm

The parameters set for the DDPG agents are shown below:

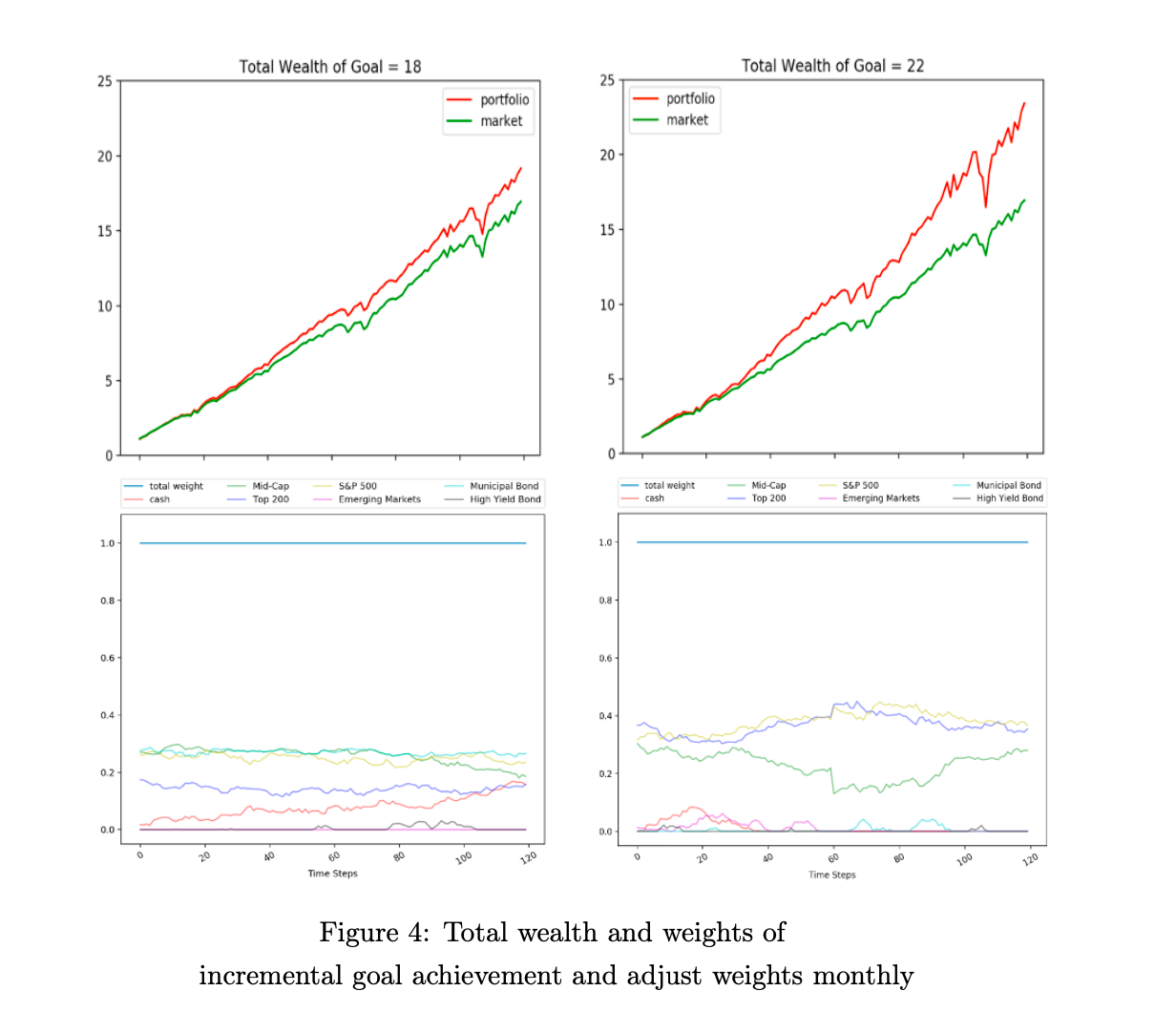

The initial goal is set to 18, and the model is observed to keep investing in safe assets at every stage. When the goal is raised to 22, the model is forced to allocate more of its portfolio to riskier assets, reducing its holdings of risk-free assets such as cash.

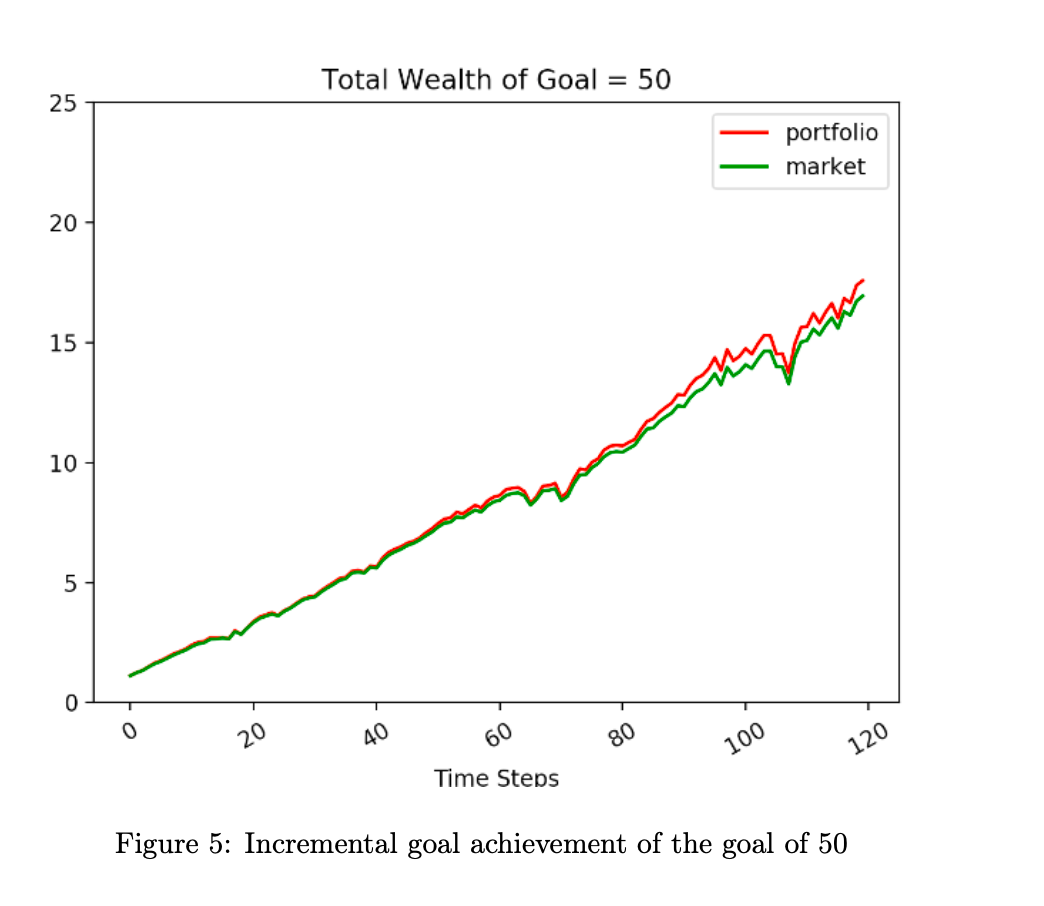
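One common way to turn a continuous actor output into an allocation across cash and risky assets is a softmax normalization. The sketch below assumes a long-only portfolio whose weights sum to 1; the function name `to_portfolio_weights` and the three-asset example are hypothetical, and the study may map actions to allocations differently.

```python
import numpy as np


def to_portfolio_weights(raw_action):
    """Map an unconstrained action vector to long-only weights summing to 1."""
    raw = np.asarray(raw_action, dtype=float)
    # Subtract the maximum before exponentiating for numerical stability.
    exp = np.exp(raw - np.max(raw))
    return exp / exp.sum()


# Hypothetical actor output for (cash, bonds, equities).
weights = to_portfolio_weights([0.2, 0.5, 1.5])
print(weights, weights.sum())
```

Under this mapping, a higher raw score for an asset always produces a larger weight, so a shift toward riskier assets shows up directly as a smaller cash weight.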

In another experiment, the goal was raised far more drastically, to 50. Such a highly aggressive goal is extremely hard to achieve. With a reward tied to an unrealistic goal, the learning effect is not significant, so the result is even worse than with a smaller goal. Hence, the model fails in this setting.
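A small sketch can illustrate why an unreachable goal weakens learning. It assumes a binary terminal reward (1 if end-of-horizon wealth reaches the goal, 0 otherwise), which is a simplification chosen for the example and not necessarily the reward used in the study. The wealth values are made up for illustration and are not results from the experiments.

```python
import numpy as np


def goal_reward(terminal_wealth, goal):
    """Hypothetical terminal reward: 1.0 if the goal is met, else 0.0."""
    return 1.0 if terminal_wealth >= goal else 0.0


def reward_signal_rate(terminal_wealths, goal):
    """Fraction of episodes that earn a nonzero reward for a given goal."""
    wealths = np.asarray(terminal_wealths, dtype=float)
    return float(np.mean(wealths >= goal))


# Made-up terminal wealth values, for illustration only.
sample_wealths = [17.5, 19.0, 21.0, 23.5, 26.0]
for goal in (18, 22, 50):
    print(goal, reward_signal_rate(sample_wealths, goal))
```

When no episode reaches the goal, every trajectory receives the same reward, so the agent has nothing to distinguish good allocations from bad ones.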

Conclusion

In this study, a goal-based approach to retirement optimization using reinforcement learning (RL) is proposed. Two RL algorithms, Q-learning and DDPG, are utilized to optimize the portfolio of a capital-preservation client with a clear goal of achieving a certain level of wealth at the end of the investment horizon. Constraints are set to prevent aggressive investment strategies that could be detrimental to retirement goals. The study shows that the DDPG algorithm outperforms conventional asset allocation strategies. The approach requires only inputs of initial wealth, future investment, and end-of-horizon goals, making it suitable for automated financial advising services, such as robo-advisors.

However, there are areas for improvement in future research. The parameters used in the model are chosen manually, and their numerical values may not be optimal. Using inverse reinforcement learning (IRL) to learn these parameters could improve numerical accuracy. Additionally, alternative investments, such as REITs or precious metals, are not included in the portfolio. Incorporating such assets poses challenges due to limited data availability and noisy time series, but techniques such as differencing and data transformations, or alternative RL methods such as G-learning or Hindsight Experience Replay, could be explored.
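As one illustration of the differencing and transformation idea, a price series can be log-transformed and then differenced to obtain log returns, which are usually closer to stationary than raw prices. This is a generic preprocessing sketch; the function name `log_returns` and the sample prices are hypothetical.

```python
import numpy as np


def log_returns(prices):
    """Log-transform a price series, then take first differences."""
    prices = np.asarray(prices, dtype=float)
    if prices.ndim != 1 or prices.size < 2:
        raise ValueError("need a one-dimensional series with at least 2 prices")
    if np.any(prices <= 0):
        raise ValueError("prices must be strictly positive")
    return np.diff(np.log(prices))


# Hypothetical price series for an alternative asset.
print(log_returns([100.0, 110.0, 121.0]))
```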